
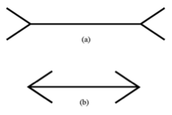

An algorithm is a procedure or formula for solving problems, often based on a set of rules or a sequence of specified actions. A computer program can be viewed as an algorithm. Algorithms underlie many decisions today, from mundane Google searches to more critical terrorism threat assessments. Almost every sector of our society relies on algorithms, and policing is no exception.
To understand the potential of using algorithms for policing, imagine you are a District Commander. Every day you are responsible for the safety of your officers and for the safety and security of the public at large. You have to decide on crime prevention strategies and how to deploy resources accordingly. You regularly need to make profound decisions. The problem is that, while you make these decisions thoughtfully, you don’t write down each decision you make or track whether it proved correct. You may expect certain factors to predict crime, but you don’t know precisely how often those expectations are accurate. Meanwhile, your new observations on the job inform subsequent decisions. Perceptions evolve over time as crime patterns change (for example, in response to successful policing strategies), and you are faced with new information, crime displacement, and new dilemmas. Datasets keep getting bigger, and they may come from multiple sources. The situational contexts keep changing.
The 10,000 officers of the LAPD once used saturation patrols in response to crime hot spots; however, this strategy had limited effect over time. To target its efforts more precisely, the department developed a “laser-like” strategy that engages multiple analysts, officers, and commanders in data analysis, resource deployments, and checks for success.
In the hot spot areas where resources are deployed, officers are told to “use their training and experience” to figure out what’s causing crime problems to persist. Empowering officers to investigate and suggest “why” spots are attractive for criminal behavior is important and necessary for crime prevention and risk governance. Is it schools, bus stops, or liquor stores? Is the risk narrative connected to interactions of people at places near major medical facilities, a military base, retail stores, or social services located in town? How, where, and when is all of this connected? There’s no need to guess. Algorithms can be a mechanism for standardizing department-wide initiatives, for objectively analyzing crime patterns, and for testing individual officers’ hypotheses about environmental attractors of criminal behavior. Algorithms such as Risk Terrain Modeling (RTM) help police form crime risk narratives that aid in patrol deployments and place-based interventions. RTMDx software makes the RTM algorithm easy to use and actionable; it was developed specifically to address the issues routinely faced by public safety professionals and to help officers meet the demands of 21st century policing. Algorithms can add confidence and consistency to information products that aid decision-making. Algorithms, and the software solutions that make them user-friendly and accessible, can be used to validate officers’ expert opinions, to prevent distractions from inconclusive sentiments, and to get people to act on key insights at the best times and places to improve efficiency. An algorithm need not be a replacement for your expertise, but rather a service in support of it. It may seem strange to rely on an algorithm to support command-level decision-making of this nature, but the gravity of the outcomes (in cost, crime, and officer safety) is exactly why such support is worth having.
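Testing an officer’s hypothesis about an environmental attractor can be made concrete with a simple comparison of crime rates. The sketch below is a deliberately simplified illustration, not RTM or RTMDx: it assumes the area is divided into grid cells, each flagged as near or not near one hypothetical feature (say, a liquor store) and carrying a crime count, and it computes the average crime count in cells near the feature divided by the average elsewhere. The `Cell` type, `relative_risk` function, and the counts are hypothetical, chosen only for this example.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    near_feature: bool  # hypothetical flag: is this cell near the feature being tested?
    crimes: int         # crime incidents recorded in this cell


def relative_risk(cells):
    """Average crimes per cell near the feature divided by the average elsewhere."""
    near = [c.crimes for c in cells if c.near_feature]
    far = [c.crimes for c in cells if not c.near_feature]
    if not near or not far:
        raise ValueError("need cells both near and away from the feature")
    rate_near = sum(near) / len(near)
    rate_far = sum(far) / len(far)
    if rate_far == 0:
        # The ratio is unbounded (or undefined if both rates are zero).
        return float("inf") if rate_near > 0 else float("nan")
    return rate_near / rate_far


# Made-up counts for illustration only.
cells = [Cell(True, 4), Cell(True, 2), Cell(False, 1), Cell(False, 0), Cell(False, 2)]
print(relative_risk(cells))
```

With these made-up counts, cells near the feature average 3 incidents against 1 elsewhere, so the ratio is 3.0. A real analysis would also test whether such a difference is statistically significant and account for other factors present in the same places.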
Studies suggest that well-designed algorithms may be far more accurate than human judgment alone. Consider how easily perception can mislead: which line is longer in the figure below? Presented with two lines of equal length, the eye is tricked into seeing one as longer than the other.
Figure: Which line is longer?
Even after it’s proven with a ruler that the lines are identical in length, the illusion persists. If perception can overwhelm reality in such a simple case, how susceptible to failure might the unchecked judgments of even the smartest, most experienced, and best among us be? When data is ‘big’, how capable are we of truly distilling it, making connections, or taking full advantage of its potential? Avoiding egregious errors in interpreting data is a key part of decision-making. Yet uncertainties always exist. The job of decision makers, then, is not to always be right, but to figure out the odds in any decision they have to make and play those odds well. Ultimately, policing depends on how well commanders and patrol officers assess the odds, or the risks, of crime threats before making decisions about prevention and response. The odds of making consistently accurate crime predictions matter greatly for selecting target areas and allocating resources, and algorithms can improve those odds. A spatial risk assessment algorithm such as RTM is designed to aid thoughtful and experienced decision-makers. It brings multiple sources of data together by connecting them to the environments in which people live, work, and behave. It offers insights about places and events in order to add context to data. RTMDx software uses RTM as its ‘engine’ to support policing strategies and to maximize the role of professional judgment in solving crime problems.
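To illustrate what bringing multiple data sources together by connecting them to places can mean in practice, here is a toy sketch of a risk overlay. It assumes each grid cell is tagged with the risk factors present in it and that each factor multiplies a cell’s relative risk by a fixed amount. The factor names and weights are hypothetical and hand-picked here, whereas a real model would estimate them from data; this is one common way to combine risk layers, not a description of how RTMDx computes its results.

```python
import math

# Hypothetical risk factors and weights (log relative risks), hand-picked for
# illustration; a real model would estimate these from data.
WEIGHTS = {"liquor_store": math.log(2.0), "bus_stop": math.log(1.5)}


def composite_risk(cell_factors):
    """Relative risk of a cell versus a cell with none of the factors present.

    Multiplicative overlay: each factor present multiplies risk by exp(weight).
    """
    return math.exp(sum(WEIGHTS[f] for f in cell_factors))


# A tiny 2x2 grid: each cell maps to the set of risk factors located there.
grid = {
    (0, 0): set(),
    (0, 1): {"bus_stop"},
    (1, 0): {"liquor_store"},
    (1, 1): {"liquor_store", "bus_stop"},
}

scores = {cell: composite_risk(factors) for cell, factors in grid.items()}
# Cells ordered from highest to lowest relative risk.
ranked = sorted(scores, key=scores.get, reverse=True)
print(ranked)
```

A cell with both factors scores 2.0 × 1.5 = 3.0 and is ranked first, ahead of cells with only one factor; a cell with no factors keeps the baseline score of 1.0.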
Ultimately, the most important part of the process is police commanders acting on the recommendations of RTMDx reports. Even the most data-driven, artificially intelligent software must embrace the human element; it should never aim to replace it. Risk assessments for crime, especially, demand that outputs from decision support software be considered in the context of other information, so that judgments about managing crime risks can be made with the greatest odds of sustained success. Many examples exist of commanders translating insights from RTM into policing actions focused on high-risk places throughout their jurisdictions. These jurisdictions have seen many benefits, including lower crime rates and improved community relations, compared to the status quo (e.g., see www.rtmworks.com). Before adopting decision support software in your jurisdiction, consider what your goals are. Certain goals will be consistent across jurisdictions, such as reducing crime or deploying resources efficiently. Other goals will be specific to your jurisdiction; they should be set by stakeholders within it and should not be delegated to a software company’s sales representatives. Goals may include evidence-based practice, transparency, actionable data, collaborative problem solving, coordination among agencies, community engagement, community relations, performance measures, or saving money. Set these goals up front. Adopt or procure decision support software that is grounded in research evidence and has a record of customer satisfaction and meaningful outcomes. Software currently marketed under the category of ‘predictive policing’ varies significantly in quality and value, and in the reliability and accuracy of the algorithms it uses.
Scrutinize sensibly, particularly software programs that have been shown to reinforce bias (e.g., see the mic.com article). Some algorithms merely serve as cover for more aggressive and less constructive policing practices. Decision support software worth its salt should inform actions that achieve your up-front goals, and should change minds and actions as the contextual dynamics of crime change (see also the Tips For Crime Forecasting blog post). Judge your options for decision support software against your jurisdiction’s goals and by the value they add to decision-making and operational practices. Algorithms and related software programs present many opportunities for policing. It’s reasonable for police agencies to embrace them to support command-level decision-making, but not with eyes wide shut.
*This essay was heavily inspired by the commentary of Adam Neufeld, “In Defense of Risk Assessment Tools.”

Here is a basic framework for arming police leaders with the tools and resources they’ll need in the 21st century to prevent crime and achieve justice on multiple fronts:

The 21st century demands a change in the culture and mindset of policing, as much as, if not more than, technological upgrades. Rear Admiral Grace Hopper suggested that perhaps the most dangerous phrase in the American language is “we’ve always done it this way”. This cannot be the way of policing.

Archives

October 2017
